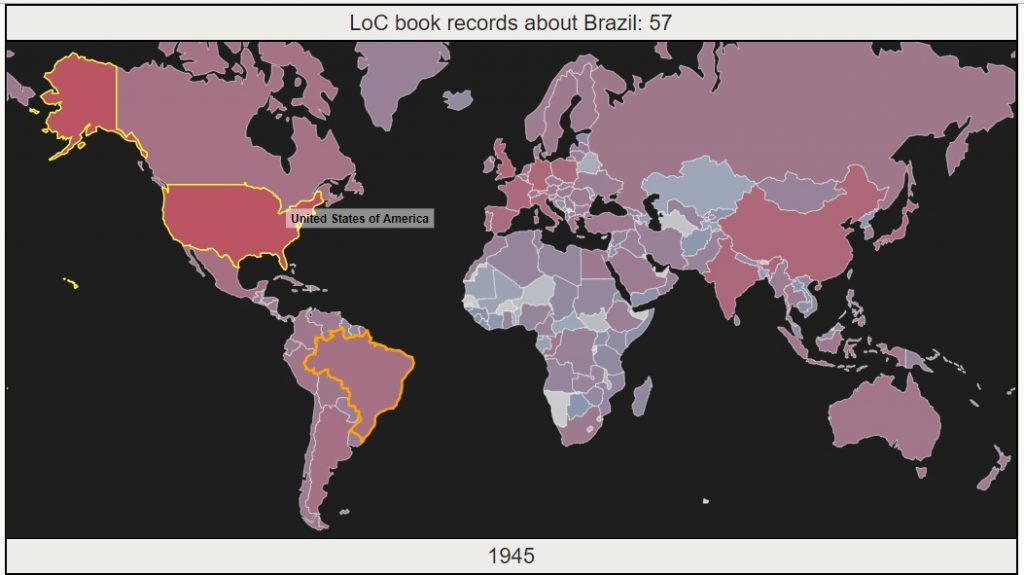

In May 2017, the US Library of Congress made the largest release of digital records in its history – metadata for over 25 million books, maps and recordings. People immediately started making some pretty cool visualizations to explore patterns in the data, or demonstrate the incredible size of the release. This page follows my process of building an animated D3 map visualization, from data cleaning to adding features. Each of the pages below covers one step in the process. Jump to the last page to explore the visualization.

- Preparing the Data – Parse massive xml record files, cache and geocode subject locations, aggregate for our visualization.

- Basic Animation – Build a D3 map that animates changing numbers of records about each country over time.

- Add a Tooltip – Add a tooltip to display country name on hovering. Select a country on click to show record counts for that country.